Persistent Monitoring on a Budget

I am a huge fan of Justin Seitz and his blog. Last holiday season he let me know that Pastebin was having a sale on its API access, and that he was planning on using it in a future project. He came out with that project in April, in a post where he used Python, the Pastebin API, and a self-hosted search engine called searx to build a persistent keyword monitor that sent an alert email any time it found a “hit”.

I was a huge fan of his post and made some modifications to his code to fit my needs. I’m much more of a hacker than a coder, so I’m sure there are more elegant ways to achieve what I did, but it’s been meeting my needs for several months and has produced multiple relevant finds. I was recently asked for a copy of my modifications, so I thought it easiest to post the code on GitHub and write up a description here.

Mod 1: Dealing with spaces in search terms.

Early on I noticed that I would have fantastic results when looking for email addresses hitting Pastebin and other sites, but was getting quite a few false positives on names. I tested searx and it appeared to respect quotes in searches the way Google does. That is, searching for Matt Edmondson without quotes will return pages that contain both “Matt” and “Edmondson”, regardless of whether they appear together. Searching for “Matt Edmondson” in quotes forces them to be adjacent. I made a minor modification to the code in the searx section to check each search term for spaces. If the term contains spaces, it places quotes around the term before searching for it in searx. This modification did indeed help reduce false positives on multi-word search terms.
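A minimal sketch of that check is below. The function name `quote_if_multiword` is my own for illustration and is not taken from the original code; it simply wraps any term containing whitespace in double quotes and leaves single-word terms (like email addresses) alone.

```python
def quote_if_multiword(term: str) -> str:
    """Wrap a search term in double quotes if it contains whitespace.

    Hypothetical helper: single-word terms and terms that are already
    quoted are returned unchanged.
    """
    term = term.strip()
    already_quoted = len(term) >= 2 and term.startswith('"') and term.endswith('"')
    if any(ch.isspace() for ch in term) and not already_quoted:
        return f'"{term}"'
    return term


if __name__ == "__main__":
    for raw in ["Matt Edmondson", "matt@example.com"]:
        print(quote_if_multiword(raw))
```

The quoted string would then be passed to searx as the query in place of the raw term.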

Mod 2: URLs I don’t care about

While my false positives were now lowered, I was still getting results from sites that were valid hits, but that I didn’t care about. I realized that for a lot of these sites, I would likely never care about any hits on them. I made a text file of “noise URLs” containing entries like https://www.stupidsite.com. Any time searx found a new hit, I had it check whether the URL contained anything from my noise URL list. If it didn’t, it proceeded as normal.

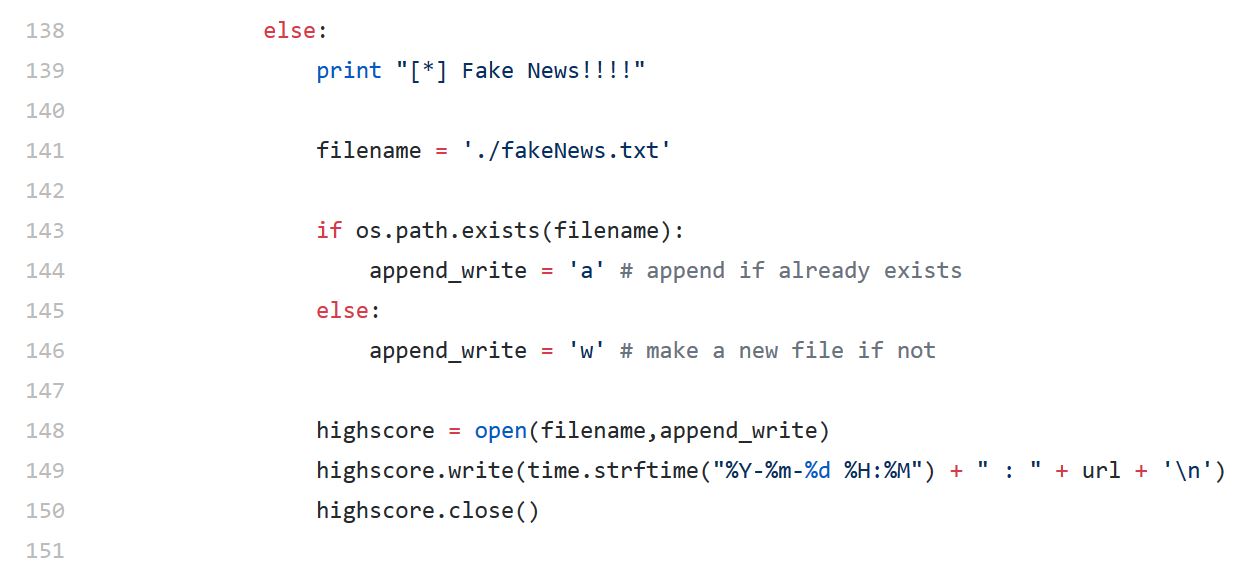

If however the searx find was in my noise URL file, the program would print “[+] Fake News!” to the screen and silently write the URL to a fake news text file instead of notifying me via email. This enabled me to reduce my noise while still having a place to go early on and see if I was ignoring anything that I shouldn’t be.
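The noise filter described above could be sketched roughly as follows. The function names and the `fake_news.txt` file name are assumptions made for illustration, not the names used in the actual code; the matching is a plain substring check of each noise entry against the hit URL.

```python
def load_noise_urls(path):
    """Read one noise URL (or URL fragment) per line, skipping blank lines."""
    with open(path, encoding="utf-8") as fh:
        return [line.strip() for line in fh if line.strip()]


def is_noise(url, noise_urls):
    """Return True if any noise entry appears anywhere in the URL."""
    return any(noise in url for noise in noise_urls)


def triage_hit(url, noise_urls, fake_news_path="fake_news.txt"):
    """Return True if the hit should trigger an alert.

    Noise hits are logged to a separate file (hypothetical name) so they
    can still be reviewed later, and False is returned instead.
    """
    if is_noise(url, noise_urls):
        print("[+] Fake News!")
        with open(fake_news_path, "a", encoding="utf-8") as fh:
            fh.write(url + "\n")
        return False
    return True
```

Because this is a substring match, an entry like `stupidsite.com` would also suppress hits on any subdomain or path of that site, which is usually what you want for a site you never care about.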

Mod 3: A picture is worth a thousand key words

Now that I was more satisfied with my signal/noise ratio, I decided to make the triage of notification emails more efficient by not just sending me the links to pages that contained my terms, but also sending me a picture of each page. This was easy to do, but did come at a cost.

I used PhantomJS to accomplish this task. Whenever the program found a hit in searx or on Pastebin, the code would open up a PhantomJS browser, visit the URL, take a screenshot, and save it to a directory so that it could later be attached to my notification emails.

This provided a huge increase in my triaging speed since I didn’t necessarily have to visit the site, just look at a picture. It was also nice a few times when the sites causing my alerts were 3rd party sites which had been hacked and contained malware.

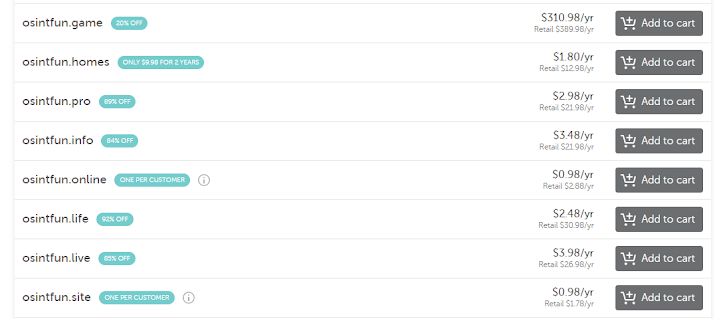

One negative with this was the increase in system requirements, since PhantomJS needs quite a bit more RAM than a normal Python script does. If you have this running on a physical system that you control, this is likely a non-issue since the specs needed are still modest. If you’re using a provider like DigitalOcean, however, I found that I needed to go from the $5 a month box to the $20 a month box before I achieved the “running for weeks unattended” stability that I desired.

Mod 4: Email Tweaks

The first tweak I made to the email section was an unbelievably minor one to allow for alerts to be sent to multiple email addresses instead of just one. I then had to modify the format of the email slightly to go from a plain text message, to a message with attachments.

As you can see in the code above, I have the send email function attach anything in the ./images subfolder (up to five items) and then delete everything in the folder so it’s clean for the next alert. The reason I limited it to five attachments was that it’s possible to get an email with a dozen or more alerts and if the pages are large, the screenshots will be large as well.

Trying to process a large number of sizeable attachments can cause the program to hang and affect my precious stability. Capping the number of attachments at five seemed like a good compromise, since it lets me get screenshots 99% of the time while only occasionally having to actually go click on a link like a barbarian 🙂
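For anyone who wants the general shape of the attachment logic, here is a rough sketch using Python's standard `email` package. It is not the code from my repo: the function name `build_alert_email`, its parameters, and the `MAX_ATTACHMENTS` constant are made up for illustration, and it only builds the message rather than sending it.

```python
import mimetypes
from email.message import EmailMessage
from pathlib import Path

MAX_ATTACHMENTS = 5  # cap taken from the post; the constant name is an assumption


def build_alert_email(sender, recipients, subject, body, image_dir="./images"):
    """Build an alert email with up to MAX_ATTACHMENTS screenshots attached.

    Every file in image_dir is deleted afterwards, attached or not, so the
    folder is clean for the next alert.
    """
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = ", ".join(recipients)  # multiple recipients in one header
    msg["Subject"] = subject
    msg.set_content(body)

    images = sorted(p for p in Path(image_dir).iterdir() if p.is_file())
    for path in images[:MAX_ATTACHMENTS]:
        ctype, _ = mimetypes.guess_type(path.name)
        maintype, subtype = (ctype or "application/octet-stream").split("/", 1)
        msg.add_attachment(
            path.read_bytes(),
            maintype=maintype,
            subtype=subtype,
            filename=path.name,
        )

    # Clean out the folder, including any screenshots beyond the cap.
    for path in images:
        path.unlink()

    return msg
```

The returned message can be handed to `smtplib.SMTP.send_message()` once you have connected and logged in to your mail server of choice.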

The next time I make mods to this, I’ll likely move all of the images to a cold storage directory which I’ll delete every week with a cron job. That way in those 1% cases where I lack a screenshot, I’ll still have one in the cold storage folder.

Once again, a HUGE hat tip to Justin Seitz. This would absolutely not exist in this form without him. I didn’t even know that searx was a thing until he introduced me to it.
