Incorporating Facial Recognition into Your OSINT

Between multiple accounts, nicknames and fake names, an individual performing OSINT will often find themselves looking at two pictures and wondering, “Is this the same person?” Sometimes it’s obvious whether it is or isn’t the same individual, but it’s not always easy. When uncertainty is high, it would be fantastic to have an unbiased algorithm examine the faces and give a similarity score. I set off to see if I could configure a generic “black box” solution that I could point at a directory of images and have it tell me which ones were of the same individual.

The first thing I needed was a robust facial recognition solution. I found OpenFace (http://cmusatyalab.github.io/openface/), an open source facial recognition engine developed at Carnegie Mellon. It’s Python-based and uses several external libraries to detect the face and align it for comparison, then runs it through a deep neural network for the analysis. That’s worth 10 points in buzzword bingo!!

Since there are so many dependencies involved, the OpenFace developers strongly recommend using the Docker image they created. That fit perfectly with the modular black box I was after, so that’s exactly what I did. I downloaded the latest Docker Community Edition for Windows and installed it on a laptop I use for OSINT. Make sure you run the installer as an administrator; after logging off and restarting, you should be good to go. You can verify the installation by opening an administrative command prompt and typing “docker version”.

I then pulled down the OpenFace image with the “docker pull bamos/openface” command. Once I had that in place, I made a “docker” directory on my C:\ drive with three subdirectories underneath it: group1, group2 and output.

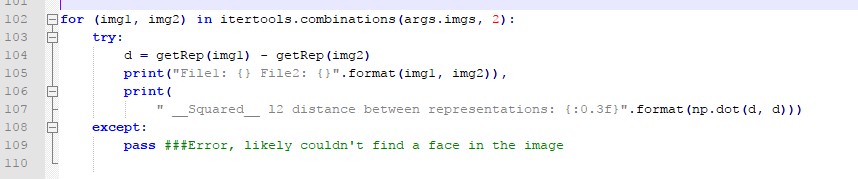
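If you’d rather script the folder setup than click through Explorer, a minimal Python sketch is below. It assumes Python 3 is installed on the Windows host, and make_layout is just an illustrative name, not part of OpenFace or Docker.

```python
from pathlib import Path


def make_layout(base):
    """Create the base folder and its group1, group2 and output subfolders."""
    base = Path(base)
    for name in ("group1", "group2", "output"):
        # parents=True also creates the base folder; exist_ok=True makes re-runs harmless
        (base / name).mkdir(parents=True, exist_ok=True)
    return base
```

Calling make_layout(r"C:\docker") would reproduce the layout in the screenshot.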

I then modified the Docker settings to share the C:\ drive with Docker containers. With that in place, it was time to make a few modifications to the Docker image. First, I made some slight changes to the /root/openface/demos/compare.py file. The modifications accomplished two things:

- Added some error handling (unmodified, the script would error out and exit if it couldn’t find a face in a picture).

- Made the text output easier to parse.

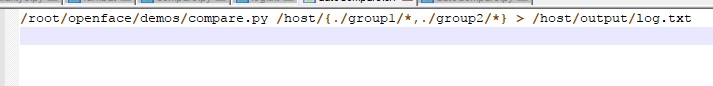

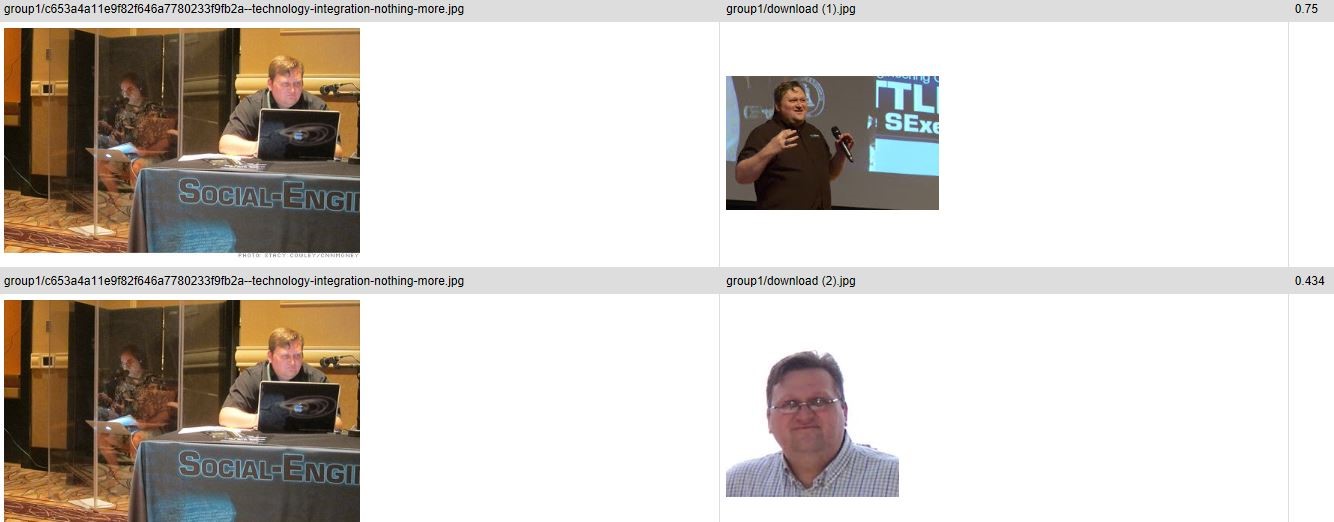
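The actual changes are in the screenshots above. As a rough, hypothetical illustration of the same two ideas (not the author’s code), the sketch below skips images where no face is found instead of exiting, and writes one comma-separated line per pair. get_rep stands in for the stock compare.py’s getRep() function, which raises an exception when it can’t find a face; the “img1,img2,distance” line format is my own assumption. It writes through out.write rather than print so the same code runs under Python 2 or 3.

```python
import itertools
import sys


def compare_images(img_paths, get_rep, out=sys.stdout):
    """Write one 'img1,img2,distance' line per pair of images that contain a face.

    get_rep stands in for compare.py's getRep(): it takes an image path and
    returns the face representation (a sequence of floats), raising an
    exception when it cannot find a face.
    """
    reps = {}
    for path in img_paths:
        try:
            reps[path] = get_rep(path)
        except Exception as exc:
            # Record the failure and skip this image instead of aborting the run
            out.write("ERROR,{},{}\n".format(path, exc))
    # Keep the caller's ordering, then compare each remaining pair once,
    # like the stock script's itertools.combinations loop
    paths = [p for p in img_paths if p in reps]
    for img1, img2 in itertools.combinations(paths, 2):
        # Squared L2 distance, the same measure the stock script prints
        dist = sum((a - b) ** 2 for a, b in zip(reps[img1], reps[img2]))
        out.write("{},{},{:0.3f}\n".format(img1, img2, dist))
```

One design note: the representations are computed once per image and cached, whereas the stock loop recomputes them for every pair.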

Now that we have our modular black box in place, we can test it with a simple Python script that takes the comparison results for the images in the group folders and generates an HTML report. NOTE: compare.py requires at least one image in the group2 directory to function properly. I put a picture of Trump in my group2 directory and all the pictures I wanted analyzed in group1. If you modify the compare script to use only group1, the output will be unnecessarily long, as it will compare images against themselves as well as against other images.

The Python script can be viewed at https://pastebin.com/8uxqNv7z.

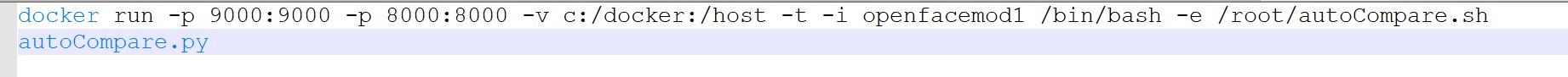
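The pastebin script is the one actually used here. As a hypothetical stand-in, the minimal report generator below assumes the log holds comma-separated “img1,img2,distance” lines (an assumed format; adapt the parsing to whatever your modified compare.py prints) and keeps only pairs scoring below 1.0. If the log records paths as seen inside the container, you would need to map them back to the C:\docker folder for the images to display.

```python
import html


def build_report(log_lines, threshold=1.0):
    """Return an HTML page listing image pairs whose distance is below threshold."""
    rows = []
    for line in log_lines:
        parts = line.strip().split(",")
        # Skip blank lines, error lines and anything else that isn't img1,img2,score
        if len(parts) != 3 or parts[0] == "ERROR":
            continue
        img1, img2, score = parts
        try:
            dist = float(score)
        except ValueError:
            continue
        if dist < threshold:
            rows.append((dist, img1, img2))
    rows.sort()  # closest matches first
    body = "\n".join(
        '<tr><td><img src="{0}" width="150"><br>{0}</td>'
        '<td><img src="{1}" width="150"><br>{1}</td><td>{2:.3f}</td></tr>'.format(
            html.escape(a, quote=True), html.escape(b, quote=True), d)
        for d, a, b in rows
    )
    return ('<html><body><table border="1">\n'
            "<tr><th>Image 1</th><th>Image 2</th><th>Distance</th></tr>\n"
            + body + "\n</table></body></html>\n")
```

For example, you might read the lines of C:\docker\output\log.txt, pass them to build_report, and write the returned string to report.html in the docker directory.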

The final step is to create a two-line batch file that has the Docker image analyze the photos and then runs our Python script on the results.
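A batch file works fine; for anyone who would rather drive both steps from Python, here is a rough equivalent using subprocess. The container mount point /root/docker, the image name, the report.py filename and the single log.txt are all assumptions, and it assumes your modified compare.py still takes image paths on the command line the way the stock one does.

```python
import subprocess
import sys

# Hypothetical values: adjust to your own folders and image name
HOST_DIR = r"C:\docker"
CONTAINER_DIR = "/root/docker"
IMAGE = "bamos/openface"  # or whatever name you saved your modified image under


def build_docker_command(host_dir=HOST_DIR, container_dir=CONTAINER_DIR, image=IMAGE):
    """Build the 'docker run' argument list that runs compare.py over both groups."""
    # The shell inside the container expands the globs and redirects output to log.txt
    inner = ("python /root/openface/demos/compare.py "
             "{0}/group1/* {0}/group2/* > {0}/output/log.txt").format(container_dir)
    return ["docker", "run", "--rm", "-v", "{}:{}".format(host_dir, container_dir),
            image, "sh", "-c", inner]


def run_pipeline(report_script="report.py"):
    """Run the comparison in Docker, then the (hypothetical) report script on the host."""
    subprocess.run(build_docker_command(), check=True)
    subprocess.run([sys.executable, report_script], check=True)
```

Keeping the command as a list avoids quoting problems on the Windows side, while sh -c lets the container’s shell handle the wildcards and the redirect.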

The end result is the raw log.txt files, containing all the results, in the output directory, plus an HTML report in the docker directory showing every photo that scored below 1.0 (identical photos score 0). I used world-famous hacker/social engineer/model Chris Hadnagy for my tests.
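The score comes from comparing OpenFace’s face representations: the stock compare.py reports the squared L2 (Euclidean) distance between them. OpenFace normalizes its 128-dimensional representations to unit length, so the distance runs from 0 (identical) to 4. The sketch below shows that calculation and the 1.0 cutoff used for the report; the function names are mine, and 1.0 simply mirrors the report’s cutoff rather than being a universal rule.

```python
def squared_l2(rep1, rep2):
    """Squared Euclidean (L2) distance between two face representations."""
    if len(rep1) != len(rep2):
        raise ValueError("representations must have the same length")
    return sum((a - b) ** 2 for a, b in zip(rep1, rep2))


def same_person(rep1, rep2, threshold=1.0):
    """Treat a pair as a likely match when the distance falls below the threshold."""
    return squared_l2(rep1, rep2) < threshold
```

With toy two-dimensional unit vectors, [1.0, 0.0] and [0.8, 0.6] are 0.4 apart and count as a match, while [1.0, 0.0] and [0.0, 1.0] are 2.0 apart and do not.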

While this is a very simple example of how facial recognition can help with our OSINT efforts, the modular nature of the modified Docker image makes it easy to incorporate this capability into the web crawlers and scrapers we use to look for matches.
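As one hypothetical way to hook a scraper into this setup, the sketch below copies whatever images a scraper has saved into group1 so the next batch run picks them up. The scrape folder, the extension list and the folder-name prefix used to reduce filename collisions are all my own assumptions.

```python
import shutil
from pathlib import Path

IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp"}


def stage_scraped_images(scrape_dir, group1_dir):
    """Copy image files found by a scraper into group1 for the next comparison run."""
    group1 = Path(group1_dir)
    group1.mkdir(parents=True, exist_ok=True)
    copied = []
    for src in sorted(Path(scrape_dir).rglob("*")):
        if src.is_file() and src.suffix.lower() in IMAGE_EXTENSIONS:
            # Prefix with the parent folder name to reduce filename collisions
            dest = group1 / "{}_{}".format(src.parent.name, src.name)
            shutil.copy2(src, dest)
            copied.append(dest)
    return copied
```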
