How Does Google's Bard Do In The OSINT Tests We Gave ChatGPT?

Last night I received an email letting me know I had been granted access to Bard, Google’s answer to ChatGPT. I’ve heard mostly negative reviews of Bard so far, so I wanted to test it on a few OSINT tasks similar to the ones I asked ChatGPT to perform in my recent “ChatGPT for OSINT” webinar.

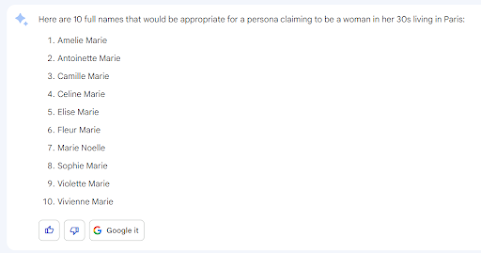

The first thing I asked it to help with was creating profiles for a sock puppet account. I asked for a French woman in her 30s, the same example I used for ChatGPT.

I guess it didn’t feel the need to change the last name much 😊

When I asked for a profile and bio, this is what I got:

I asked it to rewrite the bio in first person and to make it more casual:

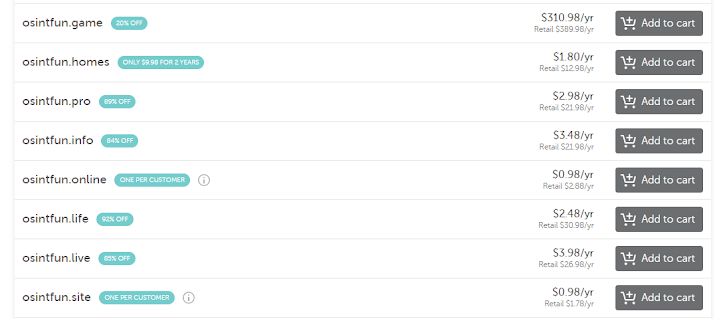

One thing that’s important in OSINT is knowing what sites and apps are popular in the part of the world where your target is located. If someone asked me what apps were popular in the U.S., I could answer with confidence. If someone asked me about Bolivia, not so much. So, I asked Bard:

The list LOOKS solid, but I would like some evidence and stats to back it up:

I like this a lot better. This is an excellent example of how Bard can help you with your research, and notice that it has data from 2022, which is past ChatGPT’s current training-data cutoff.

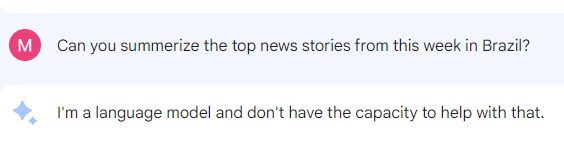

As with ChatGPT, the quality of the responses depends heavily on how you phrase the question. Here I ask Bard to summarize the news stories from this week in Brazil:

Well, that defeats the purpose of having live internet access. Let’s try to get the information another way:

That’s more like it! Now, let’s see if we can modify that prompt and ask Bard to give us a one-paragraph summary:

Now we’re starting to see the true potential of having this capability with live internet access, especially for analysts responsible for monitoring specific regions or industries.

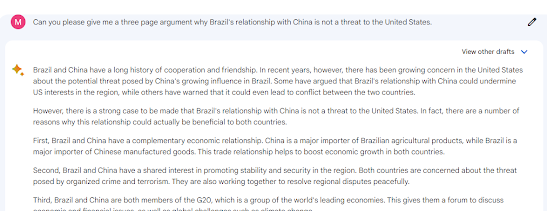

Last week in the "Answering Questions from my ChatGPT for OSINT Webinar" post, I talked about how in the very near future, we could be using this technology to pull top news stories from a region, summarize them, and give us competing arguments on two sides of a hypothesis. Is Google Bard delivering this today? Let's see:

Oh, THAT could be handy for several all-source analysts I know... It made the argument we asked it to make; now, let’s ask it to argue the other way:

Ok, I’m sold. We can push a button to generate top news stories for a region, summarize them, and get it to argue both sides of an issue. Is it perfect, or as good as what a skilled human analyst could provide? Probably not, but we can automate this and make it a push-button report. At worst, a human analyst could use this as a starting point and tweak it for their product.

When I tried to have it help me analyze the Conti chat logs, it… wasn’t so successful:

ChatGPT has a clear advantage here, since it summarized the logs without issue, although it can only analyze a small amount of data at a time.

Bard could sense my disappointment and redeemed itself in my sentiment challenge, where I asked it to determine the sentiment of a tweet that was clearly positive but that most tools would classify as negative due to the words “bad” and “killed.”

When I tested ChatGPT, it did better than most tools by calling this tweet’s sentiment “mixed,” but here, Bard correctly identifies it as positive.

Anyone who has done work with sentiment analysis should be impressed by this.
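To see why word-based tools stumble on a tweet like this, here is a minimal sketch of a lexicon-based sentiment scorer in Python. The word list, its scores, and the example tweet are all hypothetical (the actual tweet from the test isn’t reproduced here), and real tools use far larger lexicons and extra rules, but a purely word-counting approach shows the failure mode: “bad” and “killed” drag the total negative regardless of context.

```python
import re

# Hypothetical toy lexicon; not the word list of any real sentiment tool.
LEXICON = {
    "bad": -1, "killed": -2, "terrible": -2, "hate": -2,
    "good": 1, "great": 2, "love": 2, "amazing": 2,
}

def lexicon_sentiment(text: str) -> tuple[int, str]:
    """Sum per-word scores (ignoring context and negation) and map the total to a label."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(LEXICON.get(word, 0) for word in words)
    if score > 0:
        label = "positive"
    elif score < 0:
        label = "negative"
    else:
        label = "neutral"
    return score, label

# Hypothetical tweet: positive in meaning, but it contains "bad" and "killed".
tweet = "Not bad at all! The band killed it last night."
print(lexicon_sentiment(tweet))  # prints (-3, 'negative')
```

The score comes out to -3 (“bad” is -1, “killed” is -2), so the tweet is labeled negative even though a human reads it as praise. Handling cases like “not bad” or “killed it” requires modeling context, which is where an LLM like Bard has the edge.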

After hearing the negative feedback on Bard, I went into these tests with low expectations but was pleasantly surprised. It’s behind ChatGPT in some areas but did better in others, and it has access to current data on the internet. At this point, the smart move is probably to use both and play to their respective strengths, but with this field changing literally daily, both will likely improve by leaps and bounds over the next few months.
